
Post-Quantum Cryptography

Author: Miguel Lopez

Reviewer: Adam Coates

Quantum computing changes the economics of cryptanalysis, not merely the speed of computation.

For organisations that depend on public-key cryptography, the result is strategic rather than abstract: long-lived confidential data, digital signatures, code-signing chains, public key infrastructure (PKI), and secure channels must be migrated to post-quantum cryptography (PQC) on a planned timeline rather than in response to a future shock.

Executive Summary

The central security consequence of scalable quantum computing is that it breaks the mathematical assumptions behind today's dominant public-key cryptography. Shor's algorithm can solve integer factorisation and discrete logarithms efficiently, which means that RSA and elliptic-curve cryptography (ECC) will not degrade gradually under a cryptographically relevant quantum computer (CRQC); they will become structurally insecure (Shor, 1997; Chen et al., 2016).

The most urgent risk is not only future decryption. It is the present-day capture of valuable encrypted traffic under a “harvest-now, decrypt-later” model. If the period for which data must remain confidential, plus the time needed to migrate systems, extends beyond the likely arrival of a CRQC, then a future CRQC becomes a current planning problem rather than a remote technical possibility (Joseph et al., 2022; Global Risk Institute, 2025).

A practical response now exists. In August 2024, the National Institute of Standards and Technology (NIST) approved FIPS 203, FIPS 204, and FIPS 205, defining ML-KEM (Module-Lattice–based Key-Encapsulation Mechanism), ML-DSA (Module-Lattice–based Digital Signature Algorithm), and SLH-DSA (Stateless Hash-Based Digital Signature Algorithm) as the first U.S. federal standards for post-quantum key establishment and digital signatures. NIST has also stated that FALCON (Fast Fourier Lattice-based Compact Signature over NTRU) will appear in FIPS 206, which remains in development (NIST, 2024a; NIST, 2025). These standards move PQC from research into implementation and procurement.

For industry, the implication is clear. PQC migration should be managed as a multi-year resilience programme led jointly by security, enterprise architecture, engineering, procurement and risk. The immediate priorities are cryptographic inventory, data shelf-life assessment, vendor engagement, pilot deployments, and the design of crypto-agile platforms that can absorb changes to standards and implementations without repeated system redesign (Chen et al., 2016; Joseph et al., 2022; CISA, 2023).

Why post-quantum cryptography matters now

Modern digital trust relies on public-key cryptography for secure sessions, software signing, identity certificates, VPNs, payment messaging, and machine authentication. Whereas a classical bit is either 0 or 1, a qubit can exist in a superposition of both states, and multiple qubits can be entangled; quantum algorithms exploit these properties to outperform classical methods on certain problems (Joseph et al., 2022). For cryptography, the critical consequence is that some of those problems are exactly the ones on which today's public-key schemes rely for their security (Chen et al., 2016; Barrett-danes and Ahmad, 2025).

The threat is uneven. Asymmetric cryptography is the principal casualty because RSA, Diffie-Hellman and ECC depend on the hardness of factorisation or discrete logarithms. Symmetric cryptography and cryptographic hashing are affected less severely. Grover's algorithm does not destroy symmetric encryption in the way Shor's algorithm destroys RSA and ECC, but its quadratic speed-up on brute-force search does reduce effective key strength, which reinforces the case for stronger parameter choices and disciplined key management (Chen et al., 2016; ENISA, 2021).
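The difference between the two attacks can be made concrete with simple arithmetic. The sketch below is a simplified model, not a security rating: it assumes Grover's algorithm searches an n-bit keyspace in roughly 2^(n/2) steps, treats Shor's algorithm as reducing RSA, Diffie-Hellman and ECC to effectively zero bits of quantum security, and ignores error-correction overheads and the poor parallelisation of Grover's algorithm. The classical strengths used (about 128 bits for RSA-3072 and P-256) are commonly cited approximations.

```python
"""Idealised model of how quantum attacks change classical security levels.

Simplifying assumptions (illustration only, not a security rating):
- Grover's algorithm finds an n-bit key in about 2**(n/2) steps.
- Shor's algorithm breaks RSA, finite-field DH and ECC in polynomial time,
  so their quantum security is modelled as 0 bits.
- Error-correction overheads and Grover's poor parallelisation are ignored.
"""

SHOR_VULNERABLE = {"rsa", "dh", "ecc"}


def quantum_security_bits(family: str, classical_bits: int) -> int:
    """Return an idealised post-quantum security level in bits."""
    if family in SHOR_VULNERABLE:
        # Factoring and discrete logarithms become efficiently solvable.
        return 0
    if family == "symmetric":
        # Quadratic speed-up on exhaustive key search halves the exponent.
        return classical_bits // 2
    raise ValueError(f"unknown family: {family}")


for name, family, bits in [
    ("RSA-3072", "rsa", 128),
    ("ECDH P-256", "ecc", 128),
    ("AES-128", "symmetric", 128),
    ("AES-256", "symmetric", 256),
]:
    print(f"{name:<10} ~{bits} bits classical -> ~{quantum_security_bits(family, bits)} bits quantum")
```

Under this model AES-256 keeps roughly 128 bits of security, whereas no RSA or ECC key size survives, which is why the symmetric response is larger parameters rather than replacement.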


Figure 1 - The Bloch sphere: a geometrical representation of a qubit

Because the transition will take years, the relevant question is not whether a CRQC exists today. The relevant question is whether the organisation can complete migration before its protected data loses value. The 2025 Global Risk Institute survey reports that experts see a CRQC as quite possible within 10 years and likely within 15. That timeline is close enough to require immediate action in sectors that hold long-lived data or depend on long-lived trust anchors (Global Risk Institute, 2025).

Mosca and Piani (2019) set out a simple model for assessing quantum risk, known as the “Mosca inequality”. Its purpose is to help organisations determine whether their data will remain secure throughout the migration to post-quantum cryptography, by comparing the time for which data must stay confidential plus the time needed to migrate against the expected timeline for quantum computers to break current cryptographic schemes.

x + y > z

where:

x = required data confidentiality lifetime

y = time required to migrate systems to post-quantum cryptography

z = time until quantum computers can break current cryptography

If the combined duration of data sensitivity and migration effort exceeds the estimated timeline for quantum cryptanalysis, organisations are already exposed.
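The inequality can be expressed as a small check. This is a minimal sketch: the class and field names are illustrative, and the input figures in the usage lines are hypothetical rather than estimates for any real organisation.

```python
from dataclasses import dataclass


@dataclass
class MoscaAssessment:
    """Inputs to the Mosca inequality x + y > z, all in years."""
    shelf_life: float      # x: required data confidentiality lifetime
    migration_time: float  # y: time required to migrate systems to PQC
    years_to_crqc: float   # z: time until quantum computers break current crypto

    def is_exposed(self) -> bool:
        """True when x + y > z, i.e. the organisation is already exposed."""
        return self.shelf_life + self.migration_time > self.years_to_crqc

    def exposure_years(self) -> float:
        """Years of required confidentiality remaining after a CRQC arrives."""
        return max(0.0, float(self.shelf_life + self.migration_time - self.years_to_crqc))


# Hypothetical inputs: 10-year records, 5-year migration, CRQC in 10 years.
archive = MoscaAssessment(shelf_life=10, migration_time=5, years_to_crqc=10)
print(archive.is_exposed(), archive.exposure_years())  # True 5.0

# Hypothetical short-lived session data: 1 + 5 does not exceed 10.
sessions = MoscaAssessment(shelf_life=1, migration_time=5, years_to_crqc=10)
print(sessions.is_exposed(), sessions.exposure_years())  # False 0.0
```

The second value gives roughly how many years of required confidentiality would remain after a CRQC arrives; any value above zero marks data that should move up the migration queue.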

What quantum computing changes

The quantum threat should be understood by cryptographic function rather than by abstract discussion of computing power. Table 1 summarises the practical position.

This asymmetry matters commercially. Most urgent migration work concerns key establishment, digital signatures, PKI, certificate services and software/firmware trust chains. Symmetric encryption still requires attention, but it is generally an optimisation and parameter-management problem rather than a wholesale replacement problem (Chen et al., 2016; ENISA, 2021).

The operational consequence is that organisations should prioritise the systems where a future break would undermine authenticity or create irreversible exposure. These include certificate authorities, code-signing services, secure boot, HSM-backed signing keys, long-term archives, and any network protocol whose security assumptions still depend on quantum-vulnerable public-key algorithms (ENISA, 2021; Joseph et al., 2022).

The emerging threat landscape

The quantum threat is not uniform across all systems; rather, it disproportionately affects the public-key cryptographic mechanisms that underpin authentication, trust and identity on the internet.

Understanding the threat landscape requires examining how quantum attacks interact with real-world systems such as internet protocols, messaging infrastructure, and digital authentication frameworks.

Modern digital infrastructure relies heavily on public-key cryptography for establishing trust between systems. These cryptographic primitives are embedded in numerous protocols that enable secure communication across global networks.

For example, Transport Layer Security (TLS) - the protocol securing most internet traffic - uses asymmetric cryptography to authenticate servers and establish secure session keys before switching to symmetric encryption for data transmission (Chen et al., 2016). Similar mechanisms underpin virtual private networks, software distribution platforms and digital identity systems.

Case Study - Financial Institution

The financial sector presents a uniquely attractive target for adversaries due to the high economic value and long-term sensitivity of the data it processes. Financial institutions store vast quantities of confidential information, including transaction histories, personal identity data, intellectual property and regulatory records. These assets are protected primarily through encryption and digital signatures. If a CRQC compromises these mechanisms, adversaries could exploit several attack vectors.

Digital signatures verify the authenticity of financial transactions and ensure that they originate from legitimate sources. If an attacker could forge these signatures using a quantum computer, they could potentially impersonate financial institutions or authorise fraudulent transactions.

Financial institutions depend on cryptographically signed software updates to maintain the integrity of trading systems, banking applications and network infrastructure. Compromised digital signatures could allow attackers to distribute malicious software under the guise of trusted updates.

Many financial services rely on public-key certificates for identity verification, including authentication for APIs, payment gateways and internal systems. Quantum attacks against certificate infrastructure could allow adversaries to impersonate legitimate entities within financial networks.

These risks highlight why the quantum threat is considered not only a technical problem but also a systemic financial stability concern.

PQC, QKD and the current technical response

Post-quantum cryptography is the primary enterprise response because it can be deployed on classical hardware and integrated into existing software stacks. In contrast, quantum key distribution (QKD) is a specialised communications technology that can play a role in narrow contexts but does not replace general-purpose cryptography and still depends on authenticated channels. ENISA therefore treats PQC as the direct response to quantum cryptanalysis, while QKD remains supplementary rather than foundational for most enterprises (ENISA, 2021).

Research has produced several main families of PQC: lattice-based, code-based, hash-based and multivariate systems. Lattice-based schemes dominate current standardisation because they offer strong security-performance trade-offs and flexible implementation across key establishment and signature use cases. Hash-based signatures remain important where conservative assumptions and long-lived trust anchors matter, especially in firmware and software-signing contexts (Chen et al., 2016; ENISA, 2021).

The standardisation landscape is now materially clearer than it was even two years ago. NIST approved FIPS 203, FIPS 204 and FIPS 205 on 13 August 2024, covering ML-KEM, ML-DSA and SLH-DSA. NIST has also confirmed that FALCON was selected and will be published in FIPS 206, which remains in development. This is important because it means migration programmes no longer need to wait for conceptual clarity before beginning inventory, design and pilot work (NIST, 2024a; NIST, 2025).

Table 2 summarises the standards and guidance that matter most for immediate enterprise planning.

UK NCSC guidance adds practical detail to this landscape. It identifies ML-KEM and ML-DSA as the general-purpose algorithms most suitable for common enterprise use cases and recommends ML-KEM-768 and ML-DSA-65 as appropriate choices for most applications. It also positions hash-based signatures such as SLH-DSA, LMS and XMSS as better suited to slower, specialised use cases such as firmware and software signing, where large signatures are acceptable and conservative assumptions are valuable (NCSC, 2025b).

Performance, architecture and the real cost of migration

PQC is not a free substitution. The most visible architectural effect is growth in public keys, ciphertexts and signatures. That increase affects protocol messages, certificate chains, HSM capacity, network handshakes, storage footprints, update pipelines and log volumes. In other words, the migration is not just a cryptographic decision; it is an enterprise architecture decision (Joseph et al., 2022; NCSC, 2025b).
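To see why size growth is an architectural issue, the sketch below estimates the public-key and signature bytes a TLS server sends to authenticate itself. The ML-DSA-65 and SLH-DSA-SHA2-128s sizes are the raw values from FIPS 204 and FIPS 205; the classical sizes are approximate raw encodings. The chain model (two certificates plus one handshake signature) is a simplifying assumption that ignores certificate metadata, Signed Certificate Timestamps and OCSP responses.

```python
# Approximate raw sizes in bytes: (public key, signature).
# ML-DSA-65 from FIPS 204 and SLH-DSA-SHA2-128s from FIPS 205; classical
# values assume an uncompressed P-256 point, r||s ECDSA signatures and
# the RSA-2048 modulus size.
SCHEMES = {
    "ECDSA P-256": (65, 64),
    "RSA-2048": (256, 256),
    "ML-DSA-65": (1952, 3309),
    "SLH-DSA-SHA2-128s": (32, 7856),
}


def tls_auth_bytes(public_key: int, signature: int, certs_in_chain: int = 2) -> int:
    """Estimate key and signature bytes sent for TLS server authentication.

    Simplified model: each certificate in the chain carries one public key
    and one signature, and the server adds one CertificateVerify signature.
    Certificate metadata, SCTs and OCSP staples are ignored.
    """
    return certs_in_chain * (public_key + signature) + signature


for name, (pk, sig) in SCHEMES.items():
    print(f"{name:<18} {tls_auth_bytes(pk, sig):>6} bytes")
```

Under these assumptions an ML-DSA-65 chain needs about 13.8 kB of keys and signatures versus about 0.3 kB for ECDSA P-256, the kind of growth that reaches handshakes, HSM storage and log volumes.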

Real-world measurements already show this trade-off. Google and Cloudflare reported that a hybrid X25519Kyber768 key agreement increases the TLS ClientHello by roughly one kilobyte. For many connections the latency impact is small, but on some networks the larger packet can force an additional round trip. That result is not a reason to defer migration. It is a reason to test carefully, especially in performance-sensitive systems and bandwidth-constrained environments (Joseph et al., 2022).
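The ClientHello growth follows directly from the key sizes: the ML-KEM-768 encapsulation key is 1,184 bytes (the same size as a Kyber768 public key). A minimal sketch, assuming a hypothetical 512-byte classical ClientHello and a TCP maximum segment size of 1,460 bytes (a 1,500-byte Ethernet MTU minus 40 bytes of IPv4 and TCP headers), and ignoring TLS record framing and TCP options:

```python
import math

X25519_SHARE = 32           # bytes: X25519 public key / key share
MLKEM768_ENCAP_KEY = 1184   # bytes: ML-KEM-768 encapsulation key (FIPS 203)
MLKEM768_CIPHERTEXT = 1088  # bytes: ML-KEM-768 ciphertext sent by the server


def segments_needed(message_bytes: int, mss: int = 1460) -> int:
    """TCP segments needed for a message, assuming a fixed MSS and no options."""
    return math.ceil(message_bytes / mss)


baseline_client_hello = 512  # hypothetical classical ClientHello size in bytes
hybrid_client_hello = baseline_client_hello + MLKEM768_ENCAP_KEY
hybrid_server_share = X25519_SHARE + MLKEM768_CIPHERTEXT

print(hybrid_client_hello, segments_needed(baseline_client_hello), segments_needed(hybrid_client_hello))  # 1696 1 2
print(hybrid_server_share)  # 1120
```

Spilling into a second segment does not by itself add a round trip; the sketch only shows why the hybrid message crosses a size threshold that some networks and middleboxes may handle poorly, which is what testing in the target environment should reveal.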

Digital signatures create a second operational cost. Larger signatures affect code-signing packages, signed transaction records, firmware updates and archival systems. These effects matter in financial services, industrial control systems and embedded estates where bandwidth, storage, validation logic and device lifecycles are tightly constrained. The literature consistently identifies scalability and implementation readiness as primary blockers to deployment, especially in IoT and legacy environments (Barrett-danes and Ahmad, 2025; Ali et al., 2025).

This is why crypto-agility has moved from good practice to design requirement. NIST stressed the need for crypto-agility early, and later enterprise guidance reinforced that point. Systems should be designed so algorithms, parameter sets, certificate formats and validation rules can change without rewiring the whole service. That means abstraction layers in cryptographic services, flexible PKI design, upgradeable dependencies, and disciplined inventories of where public-key cryptography is actually used (Chen et al., 2016; Joseph et al., 2022; CISA, 2023).
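One way to apply this is to keep algorithm choice in policy and to store an algorithm identifier alongside every protected value, so verifiers can accept old and new algorithms during a transition. The sketch below is hypothetical: the registry, policy and function names are illustrative, and HMAC variants from Python's standard library stand in for real public-key signature schemes such as ML-DSA purely to keep the example runnable.

```python
import hashlib
import hmac
from typing import Callable, Dict, Tuple

# Hypothetical algorithm registry. HMAC variants stand in for real signature
# schemes (for example ML-DSA) so the sketch runs with the standard library.
REGISTRY: Dict[str, Callable[[bytes, bytes], bytes]] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha3-256": lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
}

# Policy lives in configuration, not in calling code.
POLICY = {
    "preferred": "hmac-sha3-256",
    "accepted": {"hmac-sha256", "hmac-sha3-256"},
}


def protect(key: bytes, msg: bytes) -> Tuple[str, bytes]:
    """Protect a message with the preferred algorithm; return (algorithm id, tag)."""
    alg = POLICY["preferred"]
    return alg, REGISTRY[alg](key, msg)


def verify(key: bytes, msg: bytes, alg: str, tag: bytes) -> bool:
    """Verify with the algorithm that produced the tag, if policy still accepts it."""
    if alg not in POLICY["accepted"] or alg not in REGISTRY:
        return False
    return hmac.compare_digest(REGISTRY[alg](key, msg), tag)


key = bytes(32)  # demo key only
legacy_tag = REGISTRY["hmac-sha256"](key, b"payload")  # produced before the switch
alg, tag = protect(key, b"payload")
print(alg, verify(key, b"payload", alg, tag), verify(key, b"payload", "hmac-sha256", legacy_tag))
# hmac-sha3-256 True True

POLICY["accepted"].discard("hmac-sha256")  # retire the old algorithm
print(verify(key, b"payload", "hmac-sha256", legacy_tag))  # False
```

Because callers never name an algorithm directly, changing the preferred scheme or retiring an old one becomes a configuration change rather than a code change.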

Hybrid deployments have a legitimate interim role, but they should not be treated as the end state. The NCSC notes that PQ/traditional hybrid schemes can ease backwards compatibility in protocols such as TLS and IKE, yet they also increase complexity and can require a second migration later. In particular, hybrid authentication within PKI is much harder than hybrid confidentiality. Where hybrid schemes are used, they should sit inside a framework designed to move cleanly to PQC-only operation once the ecosystem matures (NCSC, 2025b).
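A common way to build hybrid confidentiality is to feed both shared secrets into a single key-derivation step, so the session key stays secret unless both the classical and the post-quantum components are broken (assuming the KDF behaves as a sound key-derivation function). The sketch below implements HKDF from RFC 5869 with SHA-256 and applies it to concatenated secrets. Random bytes stand in for real X25519 and ML-KEM-768 outputs, and the context label is hypothetical; TLS and IKE specifications define their own input ordering and key schedules, so this illustrates the principle rather than any protocol's exact construction.

```python
import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with SHA-256."""
    if not salt:
        salt = bytes(hashlib.sha256().digest_size)  # zero-filled default salt
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand (RFC 5869) with SHA-256: T(i) = HMAC(PRK, T(i-1) | info | i)."""
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid(classical_secret: bytes, pq_secret: bytes, context: bytes) -> bytes:
    """Derive one 32-byte session key from both shared secrets."""
    prk = hkdf_extract(salt=b"", ikm=classical_secret + pq_secret)
    return hkdf_expand(prk, info=context, length=32)


# Stand-ins for real X25519 and ML-KEM-768 shared secrets (32 bytes each).
classical_ss = os.urandom(32)
pq_ss = os.urandom(32)
session_key = combine_hybrid(classical_ss, pq_ss, b"example hybrid v1")
print(len(session_key))  # 32
```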

Key sector implications

The quantum transition is horizontal, but its business impact is sector-specific. Regulated sectors feel the pressure earliest because they combine long data-retention periods, strict integrity requirements, complex supplier ecosystems and high supervisory expectations.

Financial institutions depend heavily on digital signatures, PKI, encrypted channels and long-lived records. Payment messaging, customer authentication, code signing for trading and payment systems, archived transaction data, and supervisory reporting all depend on trust services that will eventually need PQC. The 2025 Global Risk Institute companion work on executive barriers to action shows that financial firms understand the threat but remain challenged by prioritisation, cost, regulatory uncertainty and dependency mapping. For this sector, a quantum programme should begin with the systems whose compromise would undermine market integrity or create irreversible exposure (Global Risk Institute, 2025; Barrett-danes and Ahmad, 2025).

In industrial and operational technology environments, the challenge is often lifecycle rather than theory. Systems are deeply embedded, slow to replace and frequently reliant on suppliers, bespoke protocols and hard-to-upgrade trust anchors. The NCSC makes clear that large organisations and operators of critical national infrastructure should work towards 2035 while prioritising systems that process sensitive data or manage critical communications. Long-lived hardware roots of trust deserve early attention because they are the hardest assets to remediate late in the cycle (NCSC, 2025a; NCSC, 2025b).

Firmware, secure boot, package signing and update integrity represent a distinct priority class. If signature systems are not upgraded in time, attackers with quantum capability could forge updates or undermine device trust. Hash-based signatures remain relevant here because their assumptions are conservative and their performance profile is often acceptable for signing workflows that are infrequent but security-critical (ENISA, 2021; NCSC, 2025b).
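The conservative assumption behind hash-based signatures can be seen in the simplest such scheme, the Lamport one-time signature, whose security rests only on the preimage resistance of the hash function. The toy below uses SHA-256 and is for illustration only: each key may sign a single message, and it is not SLH-DSA, LMS or XMSS, which combine more efficient one-time or few-time signatures with hash trees to sign many messages.

```python
import hashlib
import os
from typing import Iterator, List, Tuple

LamportKey = List[Tuple[bytes, bytes]]  # 256 pairs, one pair per digest bit


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _bits(digest: bytes) -> Iterator[int]:
    """Yield the 256 bits of a SHA-256 digest, most significant bit first."""
    for byte in digest:
        for shift in range(7, -1, -1):
            yield (byte >> shift) & 1


def lamport_keygen() -> Tuple[LamportKey, LamportKey]:
    """256 pairs of random secrets; the public key is their hashes."""
    sk = [(os.urandom(32), os.urandom(32)) for _ in range(256)]
    pk = [(_h(a), _h(b)) for a, b in sk]
    return sk, pk


def lamport_sign(sk: LamportKey, message: bytes) -> List[bytes]:
    """Reveal one secret per digest bit. Never sign twice with the same key."""
    return [pair[bit] for pair, bit in zip(sk, _bits(_h(message)))]


def lamport_verify(pk: LamportKey, message: bytes, signature: List[bytes]) -> bool:
    """Check each revealed secret hashes to the matching public value."""
    if len(signature) != 256:
        return False
    return all(_h(s) == pair[bit]
               for s, pair, bit in zip(signature, pk, _bits(_h(message))))


sk, pk = lamport_keygen()
sig = lamport_sign(sk, b"firmware image v1")
print(lamport_verify(pk, b"firmware image v1", sig))  # True
print(lamport_verify(pk, b"firmware image v2", sig))  # False
print(sum(len(s) for s in sig), sum(len(a) + len(b) for a, b in pk))  # 8192 16384
```

Even this toy shows the trade-off: an 8,192-byte signature and a 16,384-byte public key are tolerable for infrequent firmware signing but costly for high-volume use.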

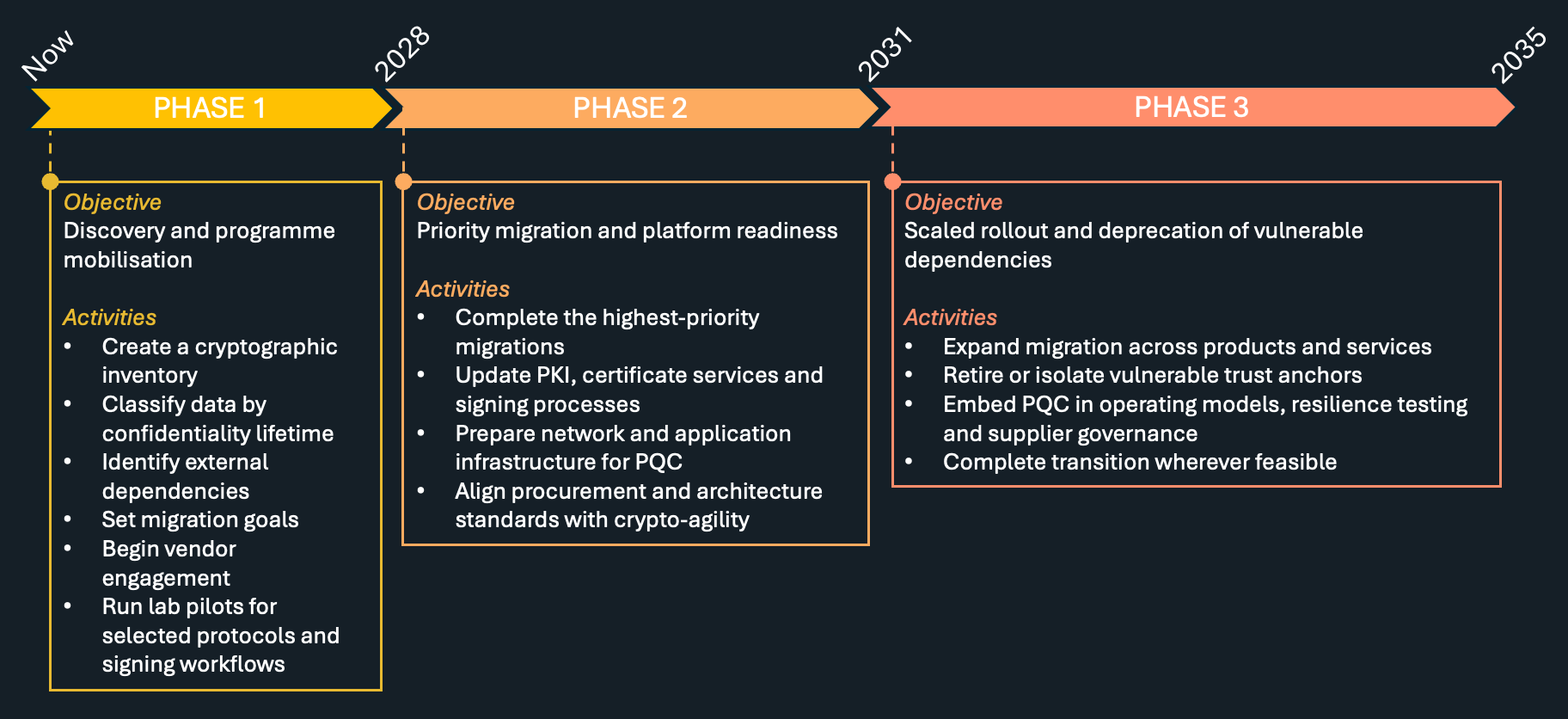

Phased roadmap for enterprise adoption

Current official guidance is converging around a staged migration model. The NCSC now recommends discovery and initial planning by 2028, completion of highest-priority migration activities and infrastructure readiness by 2031, and completion of migration by 2035. Those dates are guidance rather than law, but they provide a disciplined planning horizon for boards and programme offices (NCSC, 2025a).

The critical insight is sequencing. Inventory should begin with data shelf life, trust anchors, internet-facing services, code-signing chains and third-party dependencies rather than with a generic search for the word "encryption". CISA's roadmap makes the same point: organisations should inventory applications that use public-key cryptography, assess the lifecycle of data, test candidate standards in laboratory environments, and engage vendors early so procurement can be aligned with the migration path (CISA, 2023; CISA, 2026).
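A minimal sketch of inventory-driven prioritisation follows. The record fields, the set of quantum-vulnerable algorithm labels, the ordering of criteria, the migration and CRQC estimates, and the example assets are all hypothetical; the ordering simply combines the Mosca inequality with the trust-anchor and internet-facing criteria described above. A real inventory would also capture certificate chains, HSM dependencies and supplier ownership.

```python
from dataclasses import dataclass

# Hypothetical labels for algorithms broken by Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA", "X25519", "Ed25519"}


@dataclass
class CryptoAsset:
    name: str
    algorithm: str
    shelf_life_years: float       # how long confidentiality or trust must hold
    trust_anchor: bool = False    # e.g. root CA, code-signing or secure-boot key
    internet_facing: bool = False


def priority(asset: CryptoAsset, migration_years: float, crqc_years: float) -> tuple:
    """Sort key (higher first): vulnerable, Mosca-exposed, trust anchor, internet-facing, shelf life."""
    vulnerable = asset.algorithm in QUANTUM_VULNERABLE
    exposed = vulnerable and asset.shelf_life_years + migration_years > crqc_years
    return (vulnerable, exposed, asset.trust_anchor, asset.internet_facing, asset.shelf_life_years)


inventory = [
    CryptoAsset("internal backups", "AES-256", 10),
    CryptoAsset("public web TLS", "X25519", 1, internet_facing=True),
    CryptoAsset("customer record archive", "RSA", 25),
    CryptoAsset("code-signing root CA", "ECDSA", 15, trust_anchor=True),
]

# Hypothetical planning assumptions: 5-year migration, CRQC in 10 years.
ranked = sorted(inventory, key=lambda a: priority(a, migration_years=5, crqc_years=10), reverse=True)
for asset in ranked:
    print(asset.name)
```

With these inputs the code-signing root CA and the long-lived archive rank first, while AES-256 backups rank last because Shor's algorithm does not apply to them.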

Recommendations

Treat PQC migration as an enterprise programme, not a narrow cryptography task. Board sponsorship, risk ownership and cross-functional governance are required.

Build a defensible cryptographic inventory. Without clear visibility of PKI, certificate use, signing systems, TLS termination points, HSM dependencies and long-lived data stores, prioritisation will fail.

Use data shelf life as a decision filter. Archives, regulated records, intellectual property and software trust chains should move ahead of lower-value use cases.

Pilot selectively, but pilot now. Use controlled environments to evaluate ML-KEM and ML-DSA in protocols, signing services and vendor products rather than waiting for full ecosystem maturity.

Design for crypto-agility. Abstract cryptographic services, reduce hard-coded assumptions about key and signature sizes, and ensure formats and trust services can evolve.

Make procurement quantum-aware. Ask suppliers when PQC support will arrive, which standards they will implement, what hybrid options exist, and how legacy trust anchors will be handled.

Conclusion

The quantum threat is no longer a speculative story about distant machines. It is now a question of whether organisations can complete a complex migration before their most valuable data, signatures and trust services become exposed. The publication of NIST's first PQC standards, the release of structured UK migration timelines, and the emergence of CISA procurement guidance together mean that the planning phase should already be underway (NIST, 2024a; NCSC, 2025a; CISA, 2026).

The organisations that act early will not simply be more secure. They will also be better governed. They will understand where cryptography matters, where supplier risk accumulates, which systems require redesign, and how to build crypto-agile architectures that absorb future standards evolution with less disruption. In that sense, post-quantum migration is not only a security programme. It is an opportunity to improve the quality of enterprise architecture and long-term cyber resilience.

Whether you are assessing your current cryptographic landscape, defining a migration roadmap, or implementing quantum-resistant algorithms, Spike Reply can guide you through every phase of this critical evolution. Our team of cybersecurity specialists combines deep expertise in cryptographic standards, risk assessment, and enterprise security architecture to help organisations navigate the post-quantum transition with confidence and minimal disruption. Contact us if you want to take the first step toward quantum-resilient security!